The PlayStation 2 (PS2), launched by Sony Computer Entertainment in March 2000 in Japan and later that year worldwide, stands as a monumental console in gaming history, selling over 155 million units. Its remarkable success, however, was built upon a foundation deeply rooted in the analog television technology of its era, primarily the Cathode Ray Tube (CRT) display. Unlike modern digital systems designed around fixed pixel grids and high resolutions, the PS2, like its predecessors, was conceived with scanlines and video timing as its core output philosophy. While a niche official PS2 Linux toolkit allowed for VGA monitor connectivity and VESA display modes, this was an ancillary feature, rarely utilized by commercial game developers. Understanding the PS2’s intimate relationship with CRT technology is crucial to appreciating its unique hardware architecture and the lasting impact it had on game development, visual fidelity, and regional gaming experiences.

The Graphics Synthesizer (GS) and its Unique Architecture

At the heart of the PS2’s visual prowess lay the Graphics Synthesizer (GS), its dedicated GPU, which featured a mere 4MB of embedded VRAM. A single 640×480 framebuffer at 32 bits per pixel fits in that space, but a double-buffered pair plus a depth buffer consumes most of it, leaving very little room for textures, let alone for larger resolutions. Sony’s messaging to developers encouraged them to view this VRAM not as a conventional frame buffer but as a highly optimized scratchpad, emphasizing its immense bandwidth rather than raw capacity. This philosophy dictated many development choices. The GS offered exceptionally high internal bandwidth for its time, making operations like alpha blending, multipass rendering, and framebuffer copies (tasks that typically bottleneck other GPUs) remarkably efficient. Games such as Driv3r famously exploited these strengths, performing visual effects that would cripple less optimized hardware.
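To make the capacity pressure concrete, the following is a rough budgeting sketch. It assumes a layout of two colour buffers plus one depth buffer at the same bit depth, and it ignores the GS's page and block alignment rules, so the numbers are illustrative rather than what any particular game allocated. The function names are hypothetical.

```python
# Rough VRAM budget for the GS's 4 MB of embedded memory.
# Assumption: two colour buffers plus one depth buffer, no alignment padding.
# This is an illustrative layout, not a description of any specific game.

GS_VRAM_BYTES = 4 * 1024 * 1024


def buffer_bytes(width: int, height: int, bits_per_pixel: int) -> int:
    """Size in bytes of one buffer with the given dimensions and depth."""
    return width * height * bits_per_pixel // 8


def vram_left_for_textures(width: int, height: int, colour_bpp: int,
                           depth_bpp: int, colour_buffers: int = 2) -> int:
    """Bytes remaining after the colour buffers and a single depth buffer."""
    used = colour_buffers * buffer_bytes(width, height, colour_bpp)
    used += buffer_bytes(width, height, depth_bpp)
    return GS_VRAM_BYTES - used


if __name__ == "__main__":
    configs = [(640, 480, 32), (640, 448, 32), (640, 448, 16), (640, 224, 32)]
    for w, h, bpp in configs:
        left = vram_left_for_textures(w, h, bpp, bpp)
        print(f"{w}x{h} @ {bpp}bpp: {left / 1024:.0f} KiB left for textures")
```

Under these assumptions, a double-buffered 640×480 setup at 32 bits per pixel leaves under 500 KiB for everything else, which is why lower resolutions and reduced bit depths were so attractive.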

Further enhancing the PS2’s graphics pipeline were its two Vector Units, VU0 and VU1. These SIMD (Single Instruction, Multiple Data) coprocessors provided a fully programmable geometry pipeline, enabling hardware features akin to modern mesh shaders, concepts that only began to reappear in PC GPUs like the Nvidia GeForce RTX 20 series nearly two decades later. This sophisticated architecture allowed developers to offload complex geometric calculations, freeing up the main CPU (Emotion Engine) and contributing to the PS2’s distinctive visual style and performance capabilities despite its limited VRAM. The symbiotic relationship between the Emotion Engine, the GS, and the Vector Units created a powerful, albeit challenging, development environment that rewarded deep understanding of the hardware.

The 60fps Imperative: Interlacing and Frame Pacing

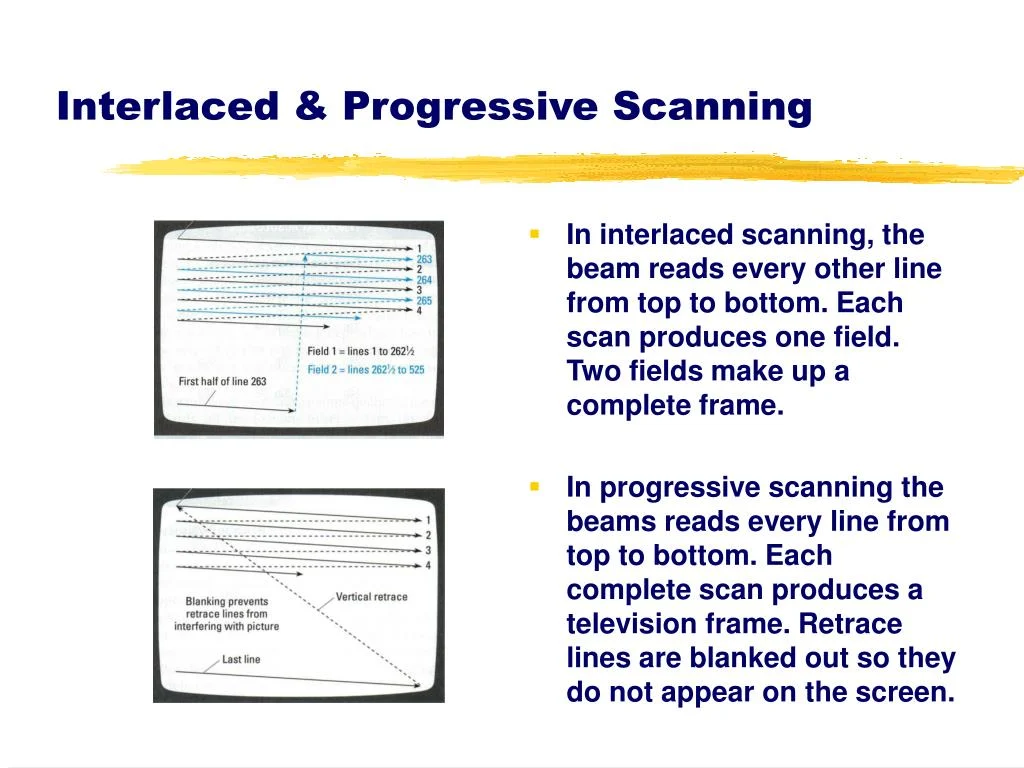

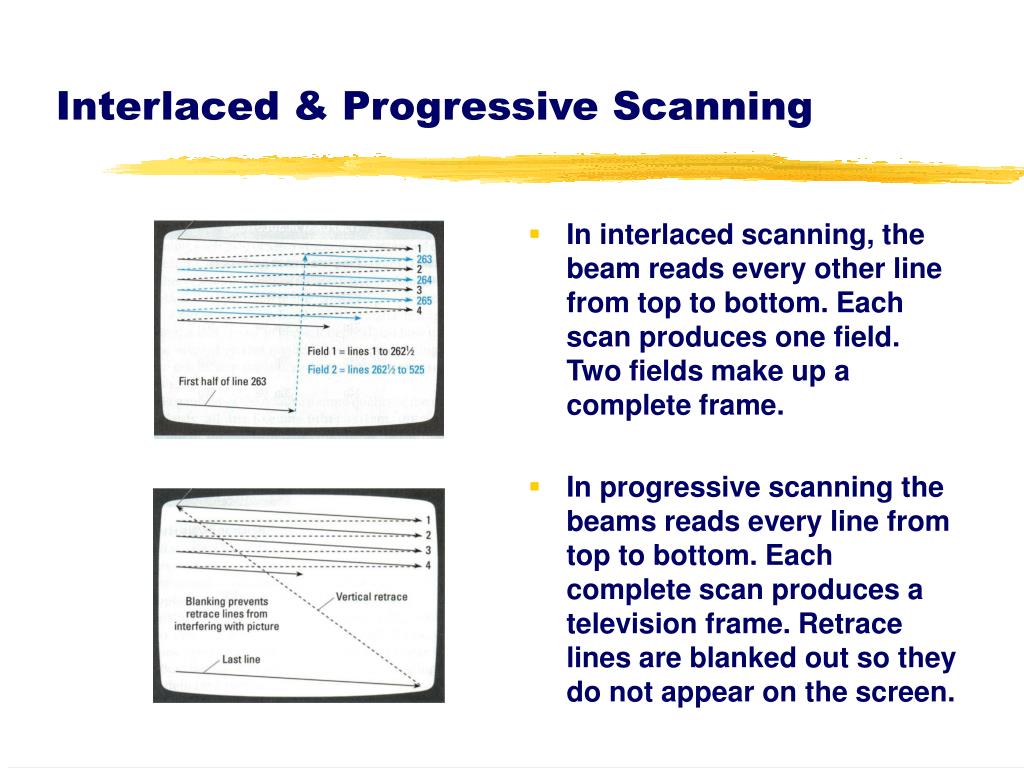

The PS2’s hardware design, perhaps inadvertently, strongly incentivized developers to target a stable 60Hz/60 frames per second (fps) for NTSC regions (or 50Hz/50fps for PAL). Early versions of the PS2’s Software Development Kit (SDK) primarily supported interlaced scanline modes, requiring a 60Hz refresh rate to achieve resolutions like 640×448. Later, developers gained the flexibility to choose between "frame mode" (rendering full frames) or "field rendered mode" (rendering interlaced fields).

Field rendering, by its nature, halves the memory requirements per frame, displaying images at effective resolutions like 640×240 or even 512×224. This reduction was critical given the GS’s 4MB eDRAM limitation for storing framebuffers. It also significantly decreased the time needed to render each output image, making it an attractive option for achieving higher performance. However, this efficiency came with a critical caveat: frame drops. If a game missed its target and the previous frame had to be displayed twice, the entire image would visibly shift vertically by one scanline. This jarring "Y-shift" made maintaining a consistent 60fps paramount. To mitigate this, many games, like SSX 3, would internally slow down gameplay by skipping render frames when performance dipped, rather than allowing the visual artifact to occur, thus preserving the illusion of a smooth 60Hz output.

Conversely, frame mode, while rendering full frames (e.g., 640×448 or 512×448), demanded more rendering time and thus made a consistent 60fps target harder to achieve. However, it was more forgiving of missed frames; if a new frame wasn’t ready, the screen would simply display the second field from the previous full frame without the disruptive Y-shift. For developers who could achieve a consistently frame-paced 60fps, field rendering on a CRT TV provided a visually acceptable output. The CRT’s analog nature would blend the half-frames, making them appear as a cohesive full image to the average user, who was largely unaware of the intricate internal workings. This reliance on CRT characteristics allowed the PS2 to leverage faster, lower-resolution rendering techniques without severe visual degradation, contributing to its reputation for having a large library of 60fps titles, especially at launch. This technical constraint effectively forced developers to prioritize stable framerates, setting the PS2 apart from its contemporaries, such as the original Xbox and GameCube, where framerates often fluctuated more widely.
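The difference between the two modes when a frame is missed can be illustrated with a toy scanout simulation. This is a simplified model under stated assumptions: the display alternates between even and odd scanlines on every vertical blank, a field-rendered image is only valid for the parity it was drawn for, and a full-height frame can supply either parity. The `Scanout` class and `simulate` function are hypothetical names, not part of any SDK.

```python
from dataclasses import dataclass


@dataclass
class Scanout:
    vblank: int
    display_parity: int   # 0 = even scanlines, 1 = odd scanlines
    content_parity: int   # parity the displayed image was rendered for

    @property
    def y_shift(self) -> bool:
        """True when an image is shown on the opposite set of scanlines."""
        return self.display_parity != self.content_parity


def simulate(ready: list[bool], field_rendered: bool) -> list[Scanout]:
    """ready[i] says whether a new image finished in time for vblank i."""
    result = []
    last_parity = 0  # parity of the most recently completed field
    for vblank, is_ready in enumerate(ready):
        display_parity = vblank % 2
        if field_rendered:
            # A half-height field only matches the parity it was drawn for.
            if is_ready:
                last_parity = display_parity
            content_parity = last_parity
        else:
            # A full-height frame holds both parities, so scanout can always
            # read the lines that match the current field.
            content_parity = display_parity
        result.append(Scanout(vblank, display_parity, content_parity))
    return result


if __name__ == "__main__":
    timeline = [True, True, False, True]  # the third image misses its deadline
    for mode in (True, False):
        shifts = [s.y_shift for s in simulate(timeline, field_rendered=mode)]
        print("field rendered" if mode else "frame mode", shifts)
```

In this model the missed deadline produces a one-scanline shift only in the field-rendered case, which matches the behaviour described above.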

Early PS2 games often faced criticism for "jaggies" (jagged edges) and a perceived lack of anti-aliasing, particularly when compared to the Sega Dreamcast. This issue was exacerbated by early game magazines and journalists who, using single-frame capture methods, would only capture half the interlaced fields in their screenshots. This resulted in print media depicting PS2 games as significantly more jaggy than they appeared on an actual CRT display, where the interlacing and analog blending masked some of these imperfections. The lower effective output resolution, necessitated by the 4MB GS eDRAM, also contributed to these misunderstandings.

Widescreen’s Emergence: CRTs and the PS2’s Aspect Ratio Challenges

The PS2 era marked a pivotal shift in television technology, with widescreen 16:9 CRT TVs gradually entering mainstream adoption in the early to mid-2000s, largely fueled by the PS2’s integrated DVD player functionality and the rising popularity of anamorphic widescreen movies. While the vast majority of console games prior to the PS2 were designed for the traditional 4:3 aspect ratio, the PS2 catalyzed the move towards widescreen gaming.

Developers typically implemented widescreen in three ways:

- Hor+ (Horizontal Plus): The game renders more of the world horizontally, expanding the field of view without cropping vertically. This is generally considered the "correct" widescreen implementation.

- Vert- (Vertical Minus): The game crops the top and bottom of the 4:3 image, effectively zooming in and reducing the vertical field of view to fit a 16:9 aspect ratio.

- Hor+ and Vert-: A hybrid approach where the image is both horizontally expanded and vertically cropped.

The majority of PS2 games, due to resource constraints and development ease, opted for Vert-. This method allowed developers to simply crop the top and bottom portions of the existing 4:3 render, then slightly zoom the remaining image to fill a 16:9 frame. This approach minimized the computational overhead, as the GS’s zoom/scaling capabilities were "free," and it ensured the image still fit within the limited 4MB GS VRAM. Iconic series like Ratchet & Clank and Jak and Daxter, along with titles like Tekken 5, employed Vert- widescreen modes. In Tekken 5, for instance, characters would appear noticeably larger than in 4:3 mode, and portions of the environment would be missing from the top and bottom of the screen.

Implementing true Hor+ widescreen required careful consideration of system resources and VRAM usage. Expanding the horizontal field of view necessitated a higher horizontal resolution to maintain image quality, which directly challenged the PS2’s reliance on CRT blending to compensate for lower-than-average resolutions. This often meant a compromise in other areas or a more significant development effort. For enthusiasts, the prevalence of Vert- implementations on sixth-generation consoles was often a source of frustration, as it failed to fully leverage the benefits of increased screen real estate. Modern emulation projects and community patches often address this by restoring proper Hor+ widescreen modes, allowing players to experience these games with an expanded field of view.
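The geometry behind Vert- and Hor+ can be sketched with a few lines of arithmetic. The example below assumes a simple perspective camera and idealised 4:3 and 16:9 ratios; the function names and the sample field-of-view values are illustrative, not taken from any particular game.

```python
import math

ASPECT_4_3 = 4 / 3
ASPECT_16_9 = 16 / 9


def vert_minus_visible_lines(lines_4x3: int) -> int:
    """Scanlines of a 4:3 render that survive a crop to 16:9 (Vert-)."""
    return round(lines_4x3 * ASPECT_4_3 / ASPECT_16_9)


def vert_minus_vfov(vfov_4x3_deg: float) -> float:
    """Vertical FOV after Vert- cropping; the horizontal FOV is unchanged."""
    half = math.radians(vfov_4x3_deg) / 2
    scale = ASPECT_4_3 / ASPECT_16_9
    return math.degrees(2 * math.atan(math.tan(half) * scale))


def hor_plus_hfov(hfov_4x3_deg: float) -> float:
    """Horizontal FOV under Hor+; the vertical FOV is unchanged."""
    half = math.radians(hfov_4x3_deg) / 2
    scale = ASPECT_16_9 / ASPECT_4_3
    return math.degrees(2 * math.atan(math.tan(half) * scale))


if __name__ == "__main__":
    print(vert_minus_visible_lines(448), "of 448 lines remain after a Vert- crop")
    print(f"Vert-: a 60 degree vertical FOV becomes {vert_minus_vfov(60):.1f}")
    print(f"Hor+: a 90 degree horizontal FOV becomes {hor_plus_hfov(90):.1f}")
```

With these assumptions, Vert- discards a quarter of the rendered lines, while Hor+ widens the view at the cost of drawing more geometry per frame.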

Progressive Scan: A Glimmer of Digital Clarity on Analog Displays

The PS2’s release coincided with the twilight years of the CRT TV. As the industry prepared for the advent of high-definition televisions (HDTVs) and LCD panels around 2005, manufacturers attempted to push the boundaries of CRT technology with Enhanced-Definition Television (EDTV), also called Extended-Definition Television. These progressive scan-capable CRTs, appearing around 2001, could support 480p (NTSC) and 576p (PAL) signals.

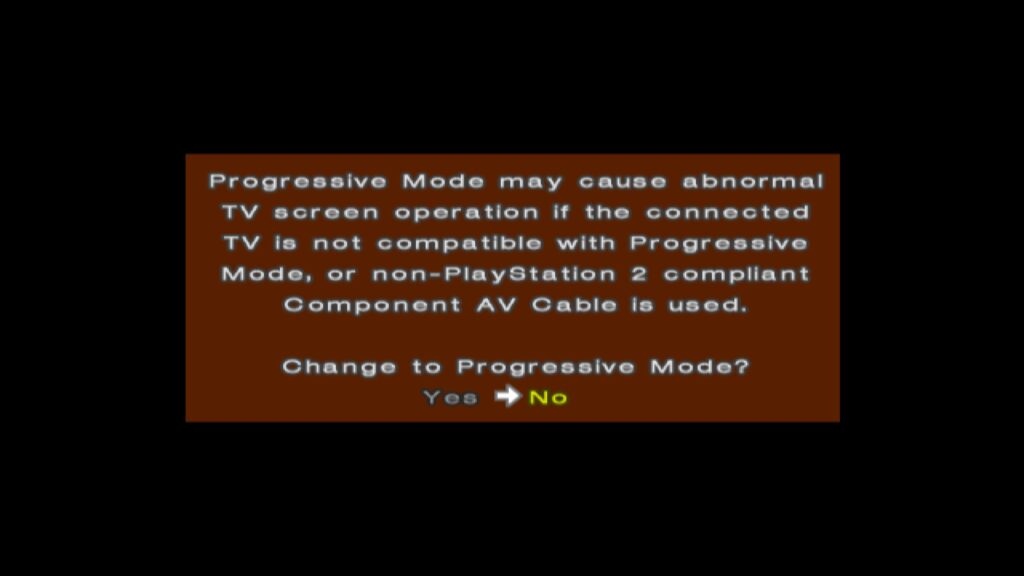

Progressive scan modes offered non-interlaced, full-frame display, eliminating the common interlacing artifacts and providing full-height backbuffers. To utilize these modes, users typically needed higher-quality video connections: component cables for NTSC regions or RGB SCART cables for European and Japanese markets, as composite and RF-AV cables lacked the necessary bandwidth. Many PS2 games supporting progressive scan allowed users to activate it by holding specific button combinations (e.g., X and Triangle) during startup, prompting a choice between standard and progressive display modes.

However, progressive scan also presented its own set of trade-offs. To accommodate the larger framebuffer requirements of a full progressive frame within the 4MB GS eDRAM, some games reduced the framebuffer depth to 16 bits per pixel (16bpp) or even lower. This trade-off, while eliminating interlacing artifacts, could introduce color banding or a slightly less vibrant final image. Despite this, for most users, the benefits of progressive scan—sharper images and reduced flickering—outweighed these minor visual compromises.
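The banding mentioned above follows from how few shades a 16-bit format can represent. The sketch below assumes a format with 5 bits per colour channel, which is a common 16bpp layout; it is an illustration of the quantisation effect in general, not a model of the GS's exact pixel formats or of any dithering a game may have applied.

```python
def quantize_channel(value_8bit: int, bits: int = 5) -> int:
    """Reduce an 8-bit channel to `bits` bits, then expand back to 0..255."""
    levels = (1 << bits) - 1
    reduced = value_8bit >> (8 - bits)
    return round(reduced * 255 / levels)


def distinct_levels(bits: int) -> int:
    """Number of different output shades across a full 0..255 gradient."""
    return len({quantize_channel(v, bits) for v in range(256)})


if __name__ == "__main__":
    gradient = [quantize_channel(v) for v in range(0, 32)]
    print("first 32 gradient steps at 5 bits:", gradient)
    print("shades per channel at 8 bits:", distinct_levels(8))
    print("shades per channel at 5 bits:", distinct_levels(5))
```

A smooth 256-step gradient collapses into 32 visible steps per channel under this assumption, which is the source of the banding in skies, fog, and other slow colour ramps.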

Notably, a few ambitious titles like Valkyrie Profile 2 and Gran Turismo 4 even offered "1080i" modes. This was somewhat misleading, as the PS2 was not rendering at a true 1920×1080 resolution. In Gran Turismo 4, for example, the internal render resolution was 640×540. The GS’s Cathode Ray Tube Controller (CRTC) then digitally magnified this image to "1920×1080" using a horizontal magnification integer (MAGH) of 3 (640 × 3 = 1920) and a vertical magnification integer (MAGV) of 2 (540 × 2 = 1080) or an interlaced framebuffer switch. While this "zoom scaling" likely appeared convincing on a CRT at the time, on modern displays, the true 480p progressive scan mode often provides a cleaner, more authentic image.
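The magnification arithmetic can be sketched as plain integer scaling. The code below treats MAGH and MAGV simply as whole-number multipliers and uses nearest-neighbour pixel repetition; it does not model the actual register encoding or how the CRTC produces the output signal, and the function names are hypothetical.

```python
def magnified_size(render_w: int, render_h: int,
                   magh: int, magv: int) -> tuple[int, int]:
    """Output dimensions after integer horizontal and vertical magnification."""
    return render_w * magh, render_h * magv


def magnify(image: list[list[int]], magh: int, magv: int) -> list[list[int]]:
    """Nearest-neighbour integer magnification of a 2D list of pixel values."""
    out = []
    for row in image:
        # Repeat every pixel magh times along the row.
        wide = [pixel for pixel in row for _ in range(magh)]
        # Repeat the widened row magv times.
        out.extend(list(wide) for _ in range(magv))
    return out


if __name__ == "__main__":
    print(magnified_size(640, 540, magh=3, magv=2))
    for row in magnify([[1, 2], [3, 4]], magh=3, magv=2):
        print(row)
```

Because each source pixel is simply repeated, the output contains no more detail than the 640×540 render, which is why the "1080i" label overstates the image quality.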

Regional Disparities: Europe, PAL, and the 50Hz/60Hz Divide

European gamers faced unique challenges due to the PAL television standard, which operated at 50Hz, in contrast to the 60Hz NTSC standard prevalent in Japan and North America. This difference meant that many early PAL conversions of PS2 games ran roughly 16.7% slower than their NTSC counterparts (50 frames shown in the time NTSC shows 60) and often featured "letterboxing" (black bars at the top and bottom), because developers frequently left PAL’s additional vertical resolution (576i versus 480i) unused.
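The slowdown and letterboxing figures follow from simple ratios. The sketch below assumes nominal 60Hz and 50Hz rates (real NTSC runs at about 59.94Hz) and a game whose logic advances once per displayed field without any speed compensation; the function names are illustrative.

```python
def pal_slowdown_percent(ntsc_hz: float = 60.0, pal_hz: float = 50.0) -> float:
    """How much slower an uncompensated game runs at the PAL refresh rate."""
    return (1 - pal_hz / ntsc_hz) * 100


def pal_duration_seconds(ntsc_seconds: float, ntsc_hz: float = 60.0,
                         pal_hz: float = 50.0) -> float:
    """Wall-clock time a sequence takes on PAL if it is timed in fields."""
    return ntsc_seconds * ntsc_hz / pal_hz


def letterbox_bar_lines(pal_lines: int = 576, used_lines: int = 480) -> int:
    """Height of each black bar when an NTSC-height image is centred on PAL."""
    return (pal_lines - used_lines) // 2


if __name__ == "__main__":
    print(f"slowdown: {pal_slowdown_percent():.1f}%")
    print(f"a 60 second lap takes {pal_duration_seconds(60.0):.0f} seconds")
    print(f"black bars: {letterbox_bar_lines()} lines top and bottom")
```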

By the time the PS2 launched, the Sega Dreamcast had already established a precedent by offering PAL 50Hz and PAL60 (60Hz) modes, allowing European players to experience games at their intended speed on compatible TVs. However, Sony initially refused to officially support PAL60 for the PS2, considering it non-standard. Consequently, most PS2 launch titles in Europe lacked 60Hz options, leaving players with slower, often letterboxed 50Hz versions.

While some UK-based developers like Psygnosis (Wipeout) and Core Design (Tomb Raider) were historically adept at optimizing for PAL, often delivering higher scanline counts and thus better image quality than NTSC versions (albeit still at 50Hz), the fundamental speed difference remained. Some developers attempted to tweak game speed to compensate, but the experience was generally inferior to a native 60Hz presentation.

The situation gradually improved around 2002, as more games began offering a choice between 50Hz and 60Hz modes at startup, exemplified by titles like ICO. Rather than utilizing a PAL60 signal, the PS2 would switch to an NTSC 480i mode for 60Hz output. This was largely compatible with European televisions, as many sets sold in the late 1990s and early 2000s supported both PAL and NTSC signals. Developers who couldn’t implement these toggles, such as those behind Silent Hill 2 and Metal Gear Solid 2, typically invested more effort into their 50Hz PAL conversions to avoid letterboxing. However, the integration of 50Hz/60Hz toggles was not without its difficulties. Developers like Square, known for their cinematic, FMV-heavy games, found it challenging to accommodate separate 50Hz and 60Hz versions of their large, high-quality full-motion video sequences on a single DVD, leading some titles like Final Fantasy X to remain 50Hz despite growing demand for 60Hz. Over time, games lacking these crucial 50Hz/60Hz options became the exception rather than the rule.

Furthermore, progressive scan modes were sometimes stripped from European versions of games (e.g., God of War 2, Soul Calibur 3), likely due to the lower adoption rates of progressive scan-capable TVs in the region compared to NTSC territories.

The Shift to LCDs and HDTVs: A Rough Transition for the PS2

The mid-2000s witnessed a seismic shift in display technology, as CRTs rapidly gave way to LCD and plasma HDTVs. This transition, which coincided with the launch preparations for the seventh generation of consoles (PlayStation 3, Xbox 360), posed significant challenges for older, CRT-centric systems like the PS2, which remained commercially viable until late 2007 in many markets.

For the new generation, the advantages were clear: the demise of PAL vs. NTSC regional differences, consistent 60Hz output via HDMI, and native non-interlaced high resolutions (480p, 720p, 1080i/p) as the default. Many consumers, who had never owned a progressive scan-capable CRT, experienced these sharper, flicker-free images for the first time.

However, early LCD HD-ready TVs were often plagued by poor image quality, high input latency, and noticeable ghosting artifacts. CRT-based consoles, particularly the PS2, looked especially poor on these nascent digital displays. Visual effects designed to leverage CRT characteristics, such as "feedback blur" for motion blur, which looked excellent on a CRT, appeared disastrous on early LCDs due to their inherent ghosting. Some games, like Soul Calibur 3, attempted to mitigate this with in-game settings like "Software Overdrive," designed to reduce afterimage effects on LCD screens, but these were often only partially effective.

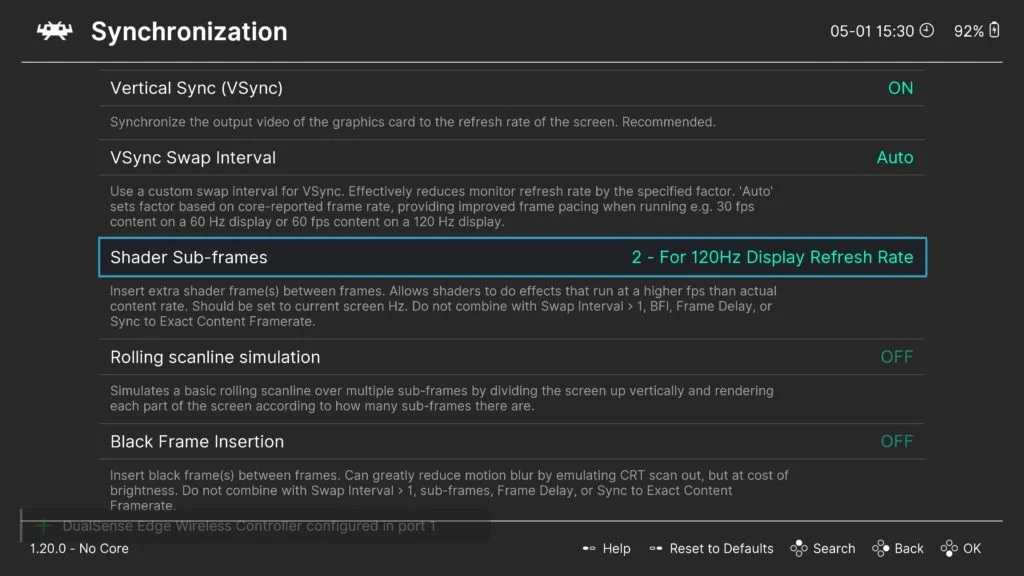

The fundamental issues of input latency and motion clarity went largely unaddressed for years, and only found convincing solutions nearly two decades later with the advent of advanced display technologies and computational rendering techniques. Today, on cutting-edge OLED screens, players can finally experience near CRT-like latency and motion clarity through innovations like Blur Busters’ "CRT beam simulator" shader. When combined with sophisticated CRT shaders, this allows for an authentic, high-quality retro gaming experience that emulates the look and feel of a CRT on contemporary displays, breathing new life into the PS2’s classic library and demonstrating the enduring relevance of understanding its original design philosophy.